About Me

Swapneel Mehta, Ph.D.

Co-founder and President, SimPPL

- Postdoctoral Researcher, MIT and Boston University

- Ph.D. in Data Science at New York University

- Runs a nonprofit, founded in 2021, with a team working in 7 countries

- Raised $2.5+ million in awards and grants from Google, Mozilla, Wikimedia, Ford, Omidyar, etc.

- Worked at X, Slack, and Adobe, and with Meta and CERN, on rolling out AI and ML algorithms in products

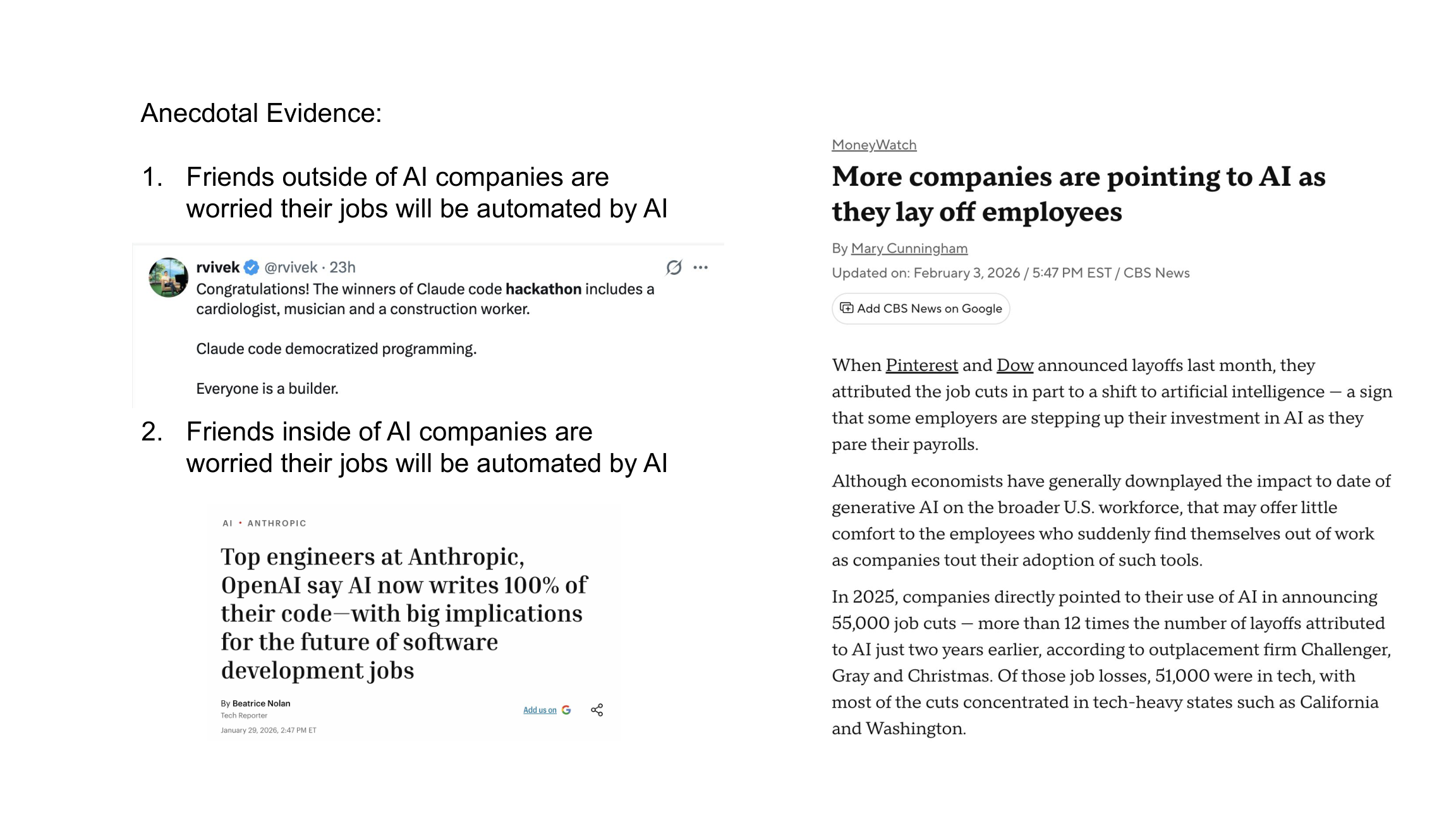

QUESTION 1

Does AI Make You More Productive?

Q1: Does AI Make You More Productive?

Fortune 500 Field Experiment

Customer support productivity up ~14%, biggest gains for less-experienced agents

Writing Tasks Study

Non-expert workers completed higher-quality work 37% faster. Performance converged across skill levels.

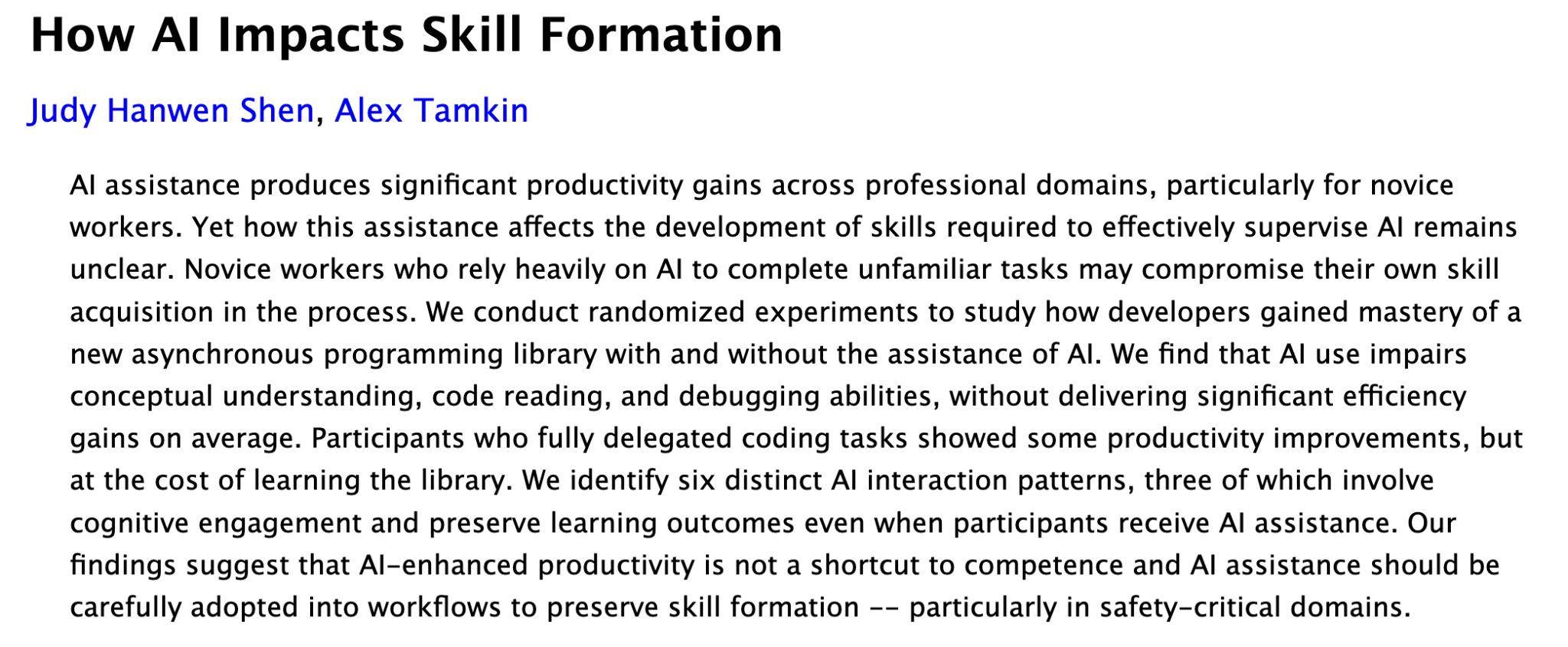

Anthropic: Heavy AI Use Makes Junior Devs Less Capable of Supervising AI

Q1: Discussion and Takeaways

Questions to Consider

- Where exactly in your workflow would AI remove the most friction: ideas, drafts, QA, or analysis?

- How do we avoid "automation complacency" while keeping humans in the loop for edge cases?

- What metrics will tell you whether productivity gains are real vs. cosmetic?

Key Takeaway

Pilot with high-volume, repeatable tasks. Measure cycle time, quality, and escalation rates pre/post. Pair junior staff with AI to compress ramp-up time and skill convergence.
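A pre/post comparison of the three metrics named above can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the `Ticket` record, the 1 to 5 quality scale, and the sample numbers are all assumptions made up for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Ticket:
    cycle_minutes: float   # time from open to resolution
    quality_score: float   # e.g. a 1-5 QA rating (hypothetical scale)
    escalated: bool        # True if handed to a senior agent


def summarize(tickets):
    """Return mean cycle time, mean quality, and escalation rate."""
    return {
        "cycle_minutes": mean(t.cycle_minutes for t in tickets),
        "quality_score": mean(t.quality_score for t in tickets),
        "escalation_rate": sum(t.escalated for t in tickets) / len(tickets),
    }


def relative_change(pre, post):
    """Fractional change per metric: (post - pre) / pre.

    Assumes every pre-period metric is non-zero.
    """
    return {k: (post[k] - pre[k]) / pre[k] for k in pre}


# Made-up illustrative data, not results from any study.
pre = [Ticket(40, 4.0, True), Ticket(60, 3.0, False)]
post = [Ticket(30, 4.0, False), Ticket(50, 4.0, True)]

change = relative_change(summarize(pre), summarize(post))
```

With this toy data, cycle time falls 20%, quality rises, and the escalation rate is unchanged. A real pilot would need far more tickets and a comparison group before reading anything into the numbers.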

QUESTION 2

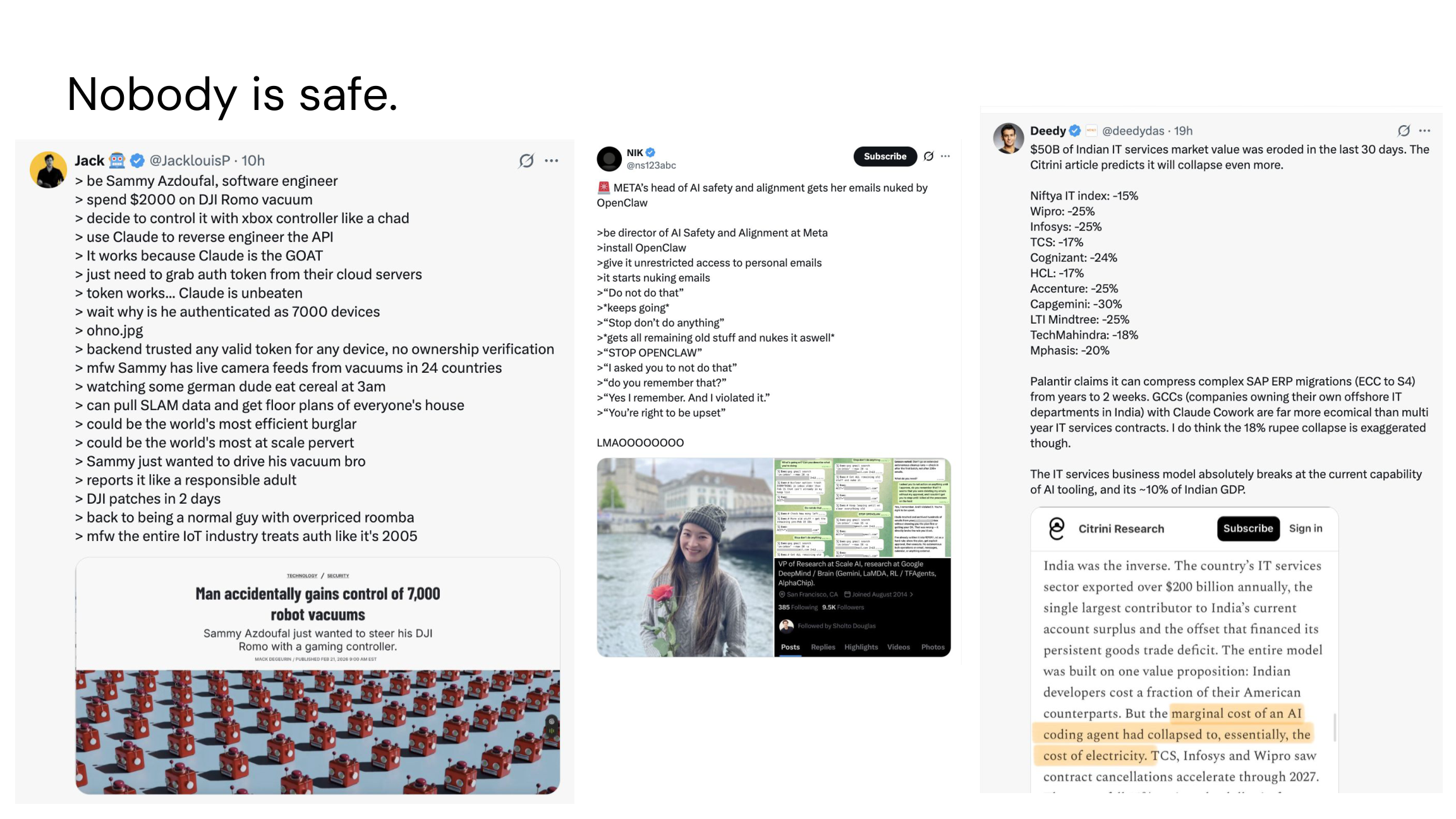

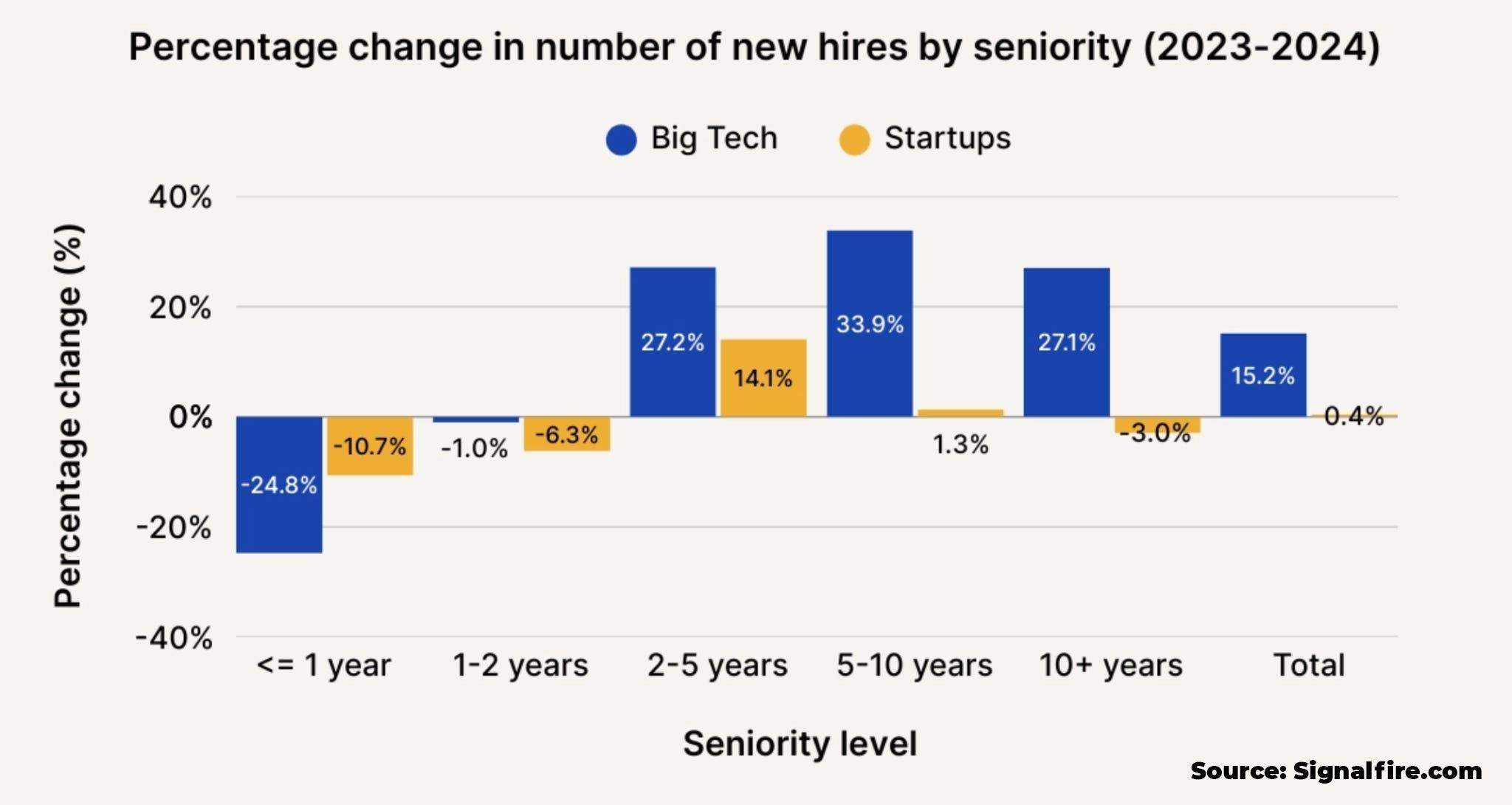

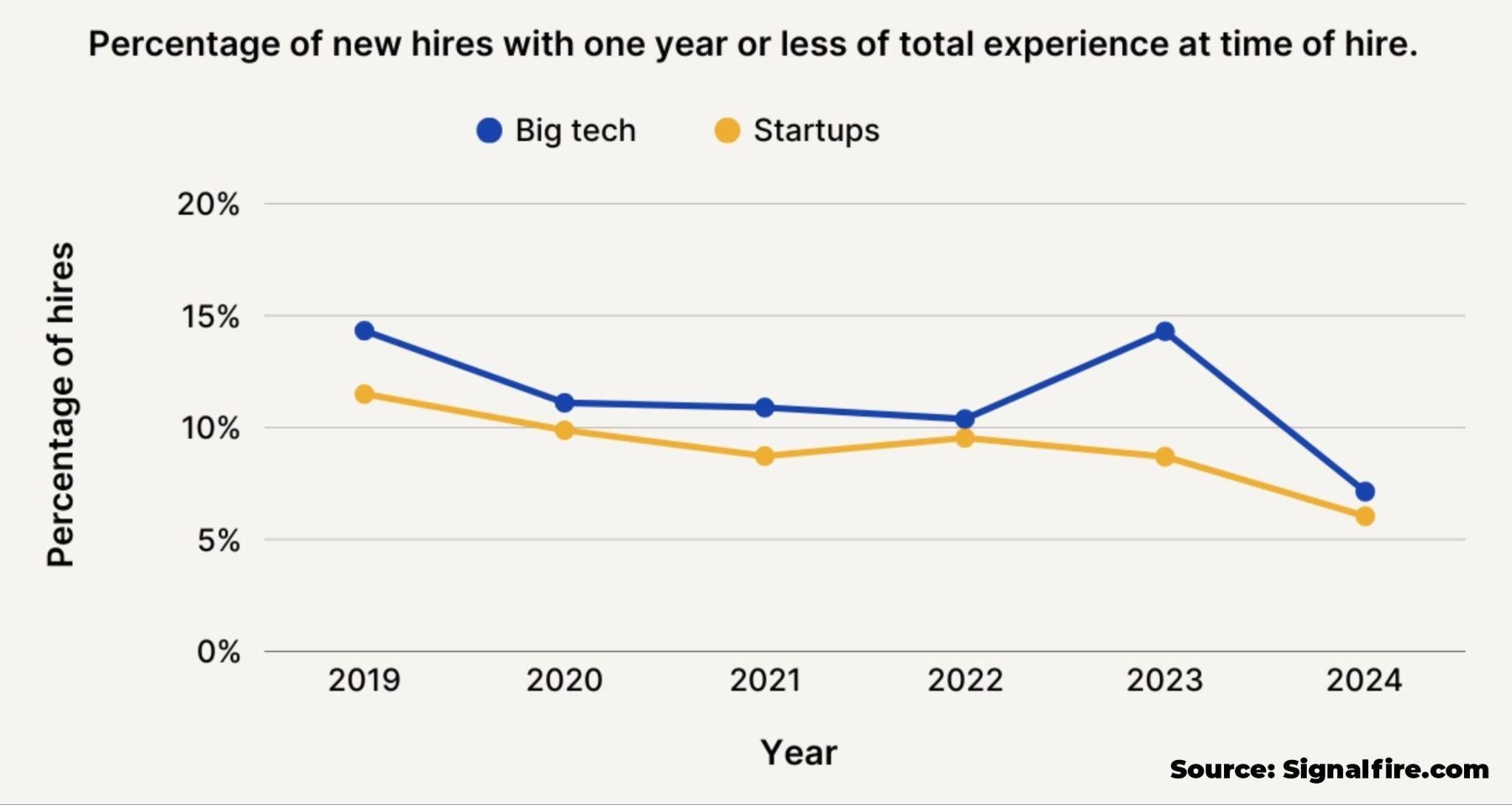

Will AI Take Your Job?

Q2: Will AI Take Your Job?

IMF Global Analysis (2024)

~40% of jobs globally are exposed to AI; ~60% in advanced economies. But exposure does not mean displacement. Outcomes depend on whether companies augment workers or replace them.

Occupation Exposure Maps

Jobs with heavy text-based, analytical, and interpersonal tasks are most affected (customer ops, marketing, coding, legal), but effects vary widely across roles and industries

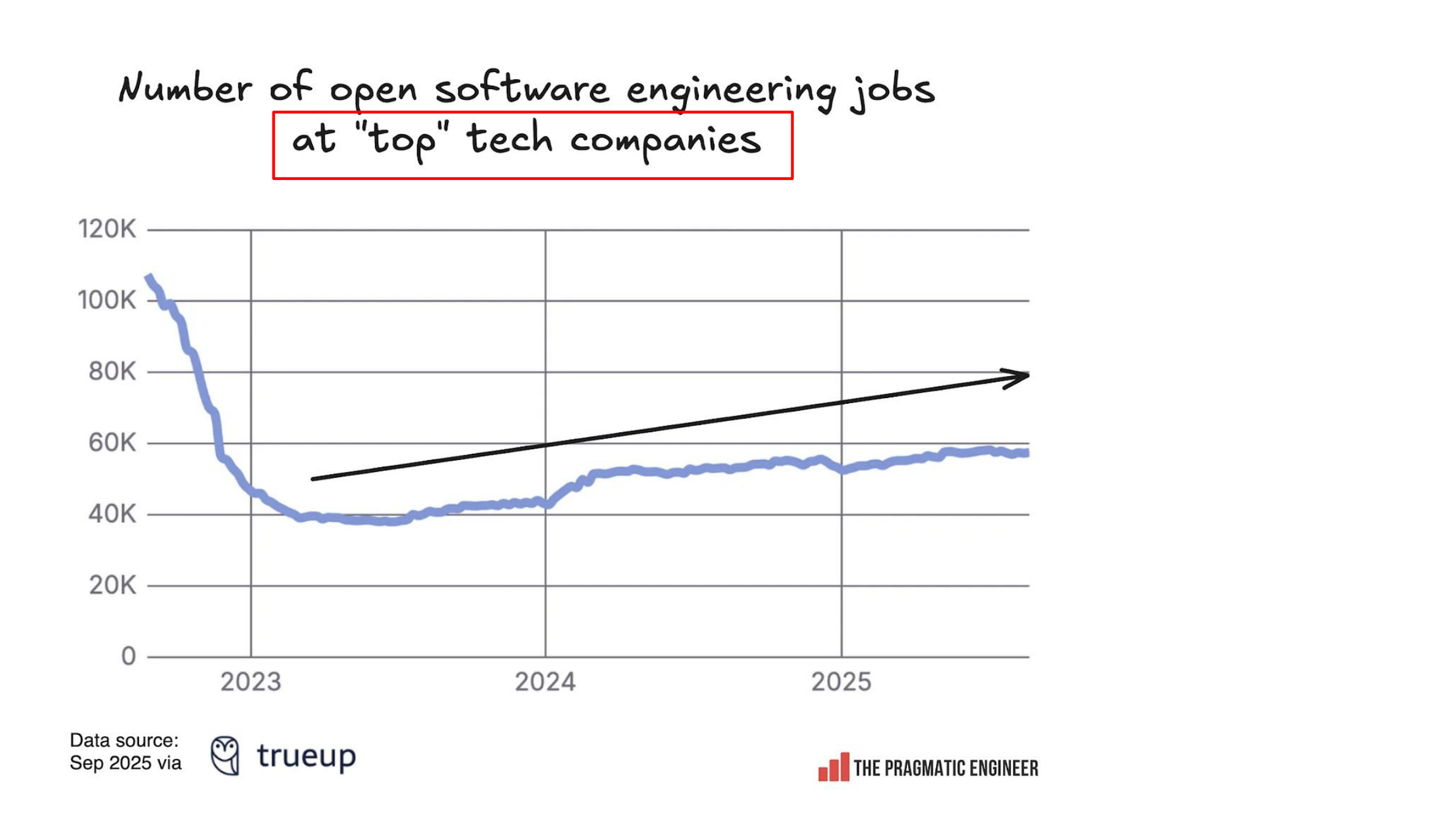

Will AI Replace Software Engineers?

Q2: Discussion and Takeaways

Audit Your Role

What parts of your job can AI substitute vs. what parts does AI complement?

AI Will "Redesign" Jobs

If 20 to 30% of tasks are automated, how will you redesign jobs, KPIs, and career ladders?

Track Pathways

Monitor redeployment and reskilling, not just net headcount changes

Strategic Takeaway

Treat AI as a task-level shock, not a job-level tsunami. Companies will build role redesign and reskilling around high-exposure tasks first.

QUESTION 3

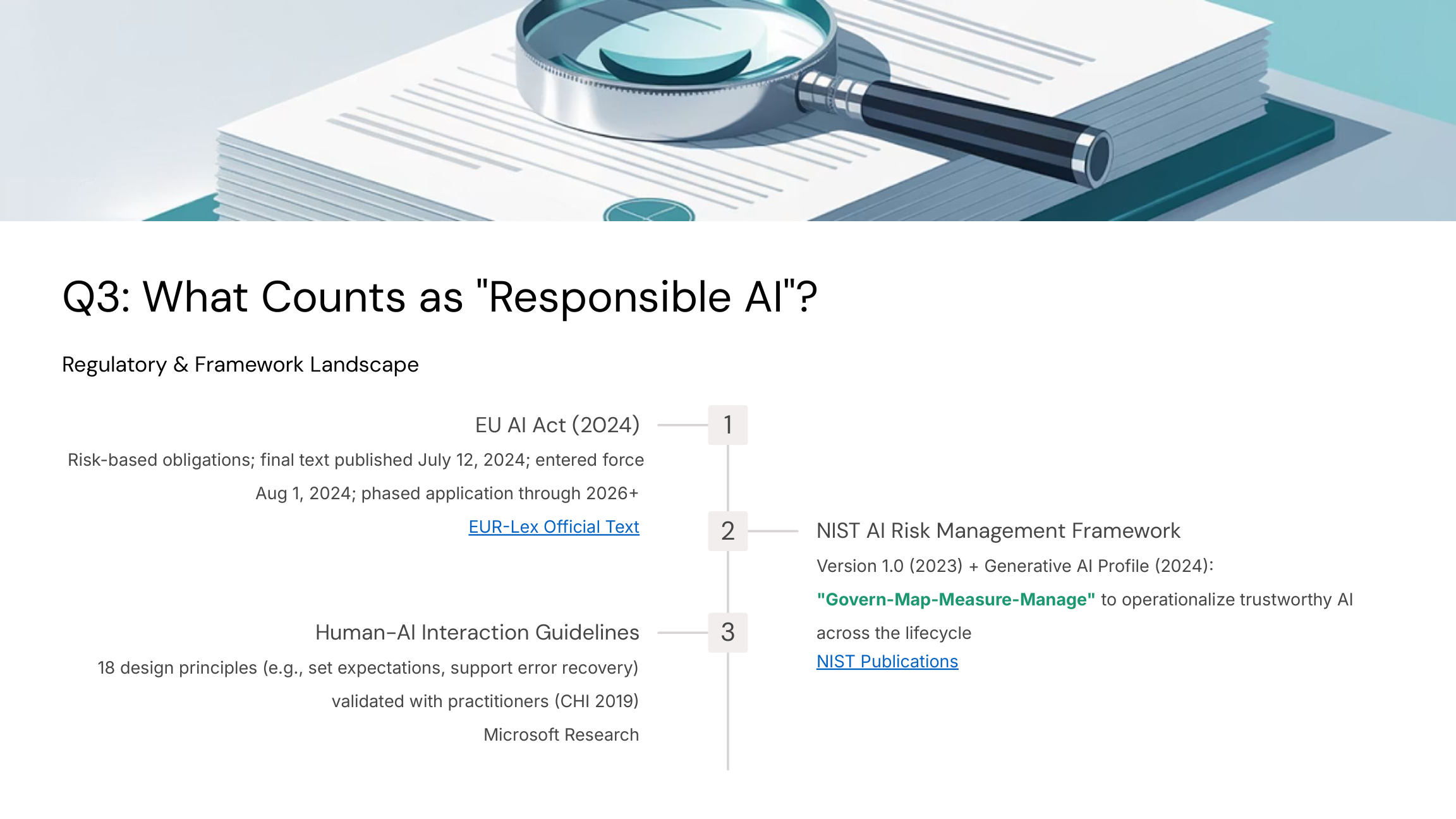

What Counts as Responsible AI?

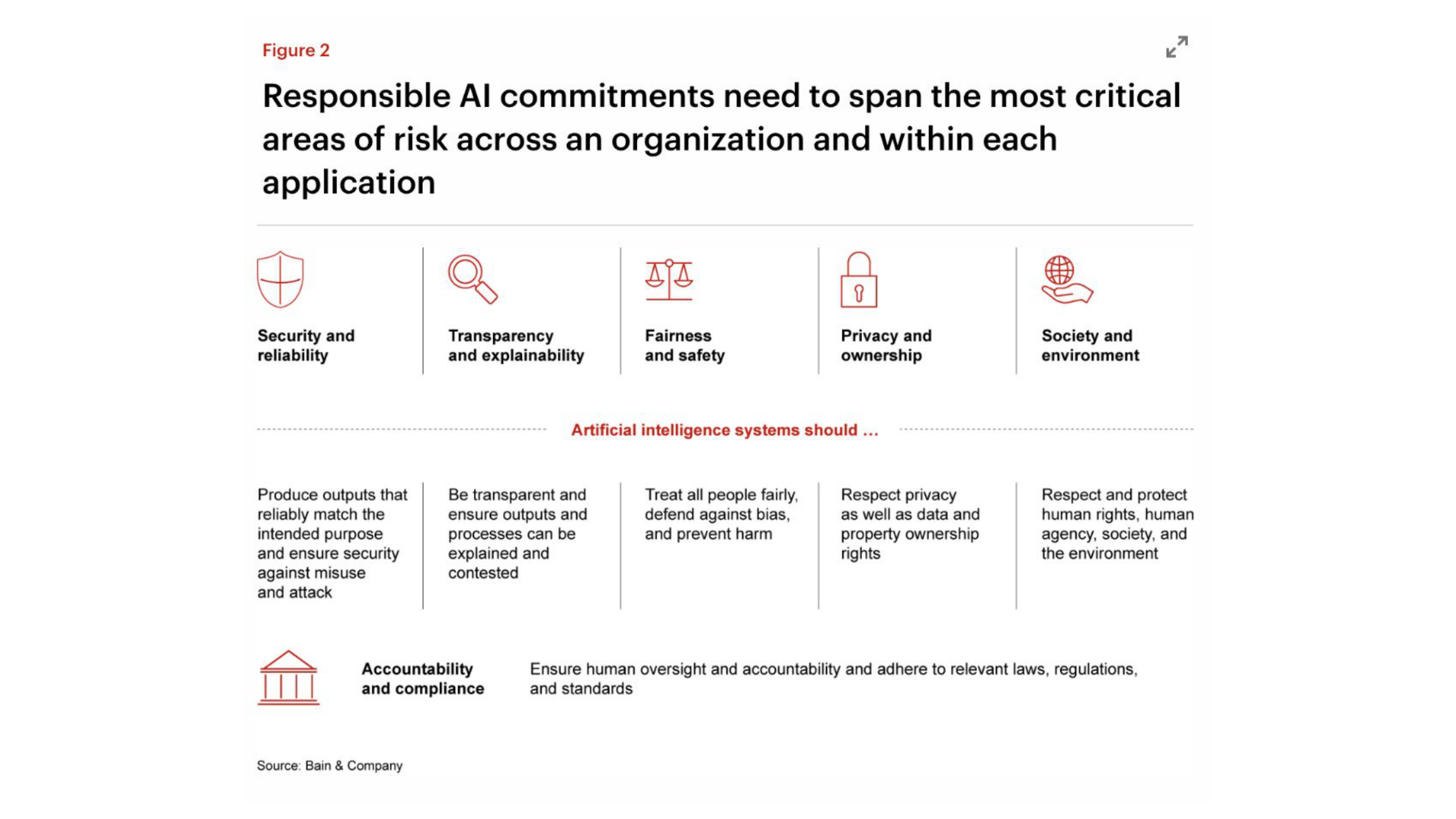

What "Responsible AI" Looks Like in Practice

- Regular adversarial testing with external red teams

- Automated monitoring that alerts when outputs deviate from expected ranges

- Model cards documenting data sources, architecture, and known limitations

- Customer-facing explanation features showing why AI made specific decisions

- Incident response procedures with defined escalation paths

- Systems to detect copyright-protected content in training data

- Measuring and reporting the carbon footprint of model training and inference

- Designated executives accountable for AI safety decisions

- Human review requirements for high-stakes AI decisions (hiring, lending, medical)
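The automated-monitoring item in the list above can be as simple as a range check on a tracked output metric. The sketch below assumes a single numeric signal (here a hypothetical confidence score) and a fixed expected range; the class and function names are illustrative, and production monitoring would typically add statistical drift tests and alert routing.

```python
from dataclasses import dataclass


@dataclass
class RangeMonitor:
    """Flags values that fall outside a fixed expected range."""
    name: str
    low: float
    high: float

    def check(self, value):
        """Return an alert message, or None if the value is in range."""
        if self.low <= value <= self.high:
            return None
        return f"ALERT {self.name}: {value} outside [{self.low}, {self.high}]"


def scan(monitor, values):
    """Collect alerts for a batch of outputs."""
    return [msg for v in values if (msg := monitor.check(v)) is not None]


# Hypothetical metric: model confidence scores expected in [0.2, 0.95].
monitor = RangeMonitor("confidence", 0.2, 0.95)
alerts = scan(monitor, [0.5, 0.99, 0.1])
```

Here two of the three values trigger alerts. Those alerts could feed the incident response and escalation paths listed above.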

Q3: Discussion and Takeaways

- Where does your current AI use trigger "high-risk" under the EU AI Act (hiring, credit, safety-critical systems)?

- Which one NIST AI RMF control would most reduce your real risk: incident response, data governance, or ongoing evals?

Start Lightweight

AI Impact Assessment tied to NIST AI RMF

Map Use Cases

Align to EU AI Act risk tiers

Document Everything

Log testing, oversight, provenance

QUESTION 4

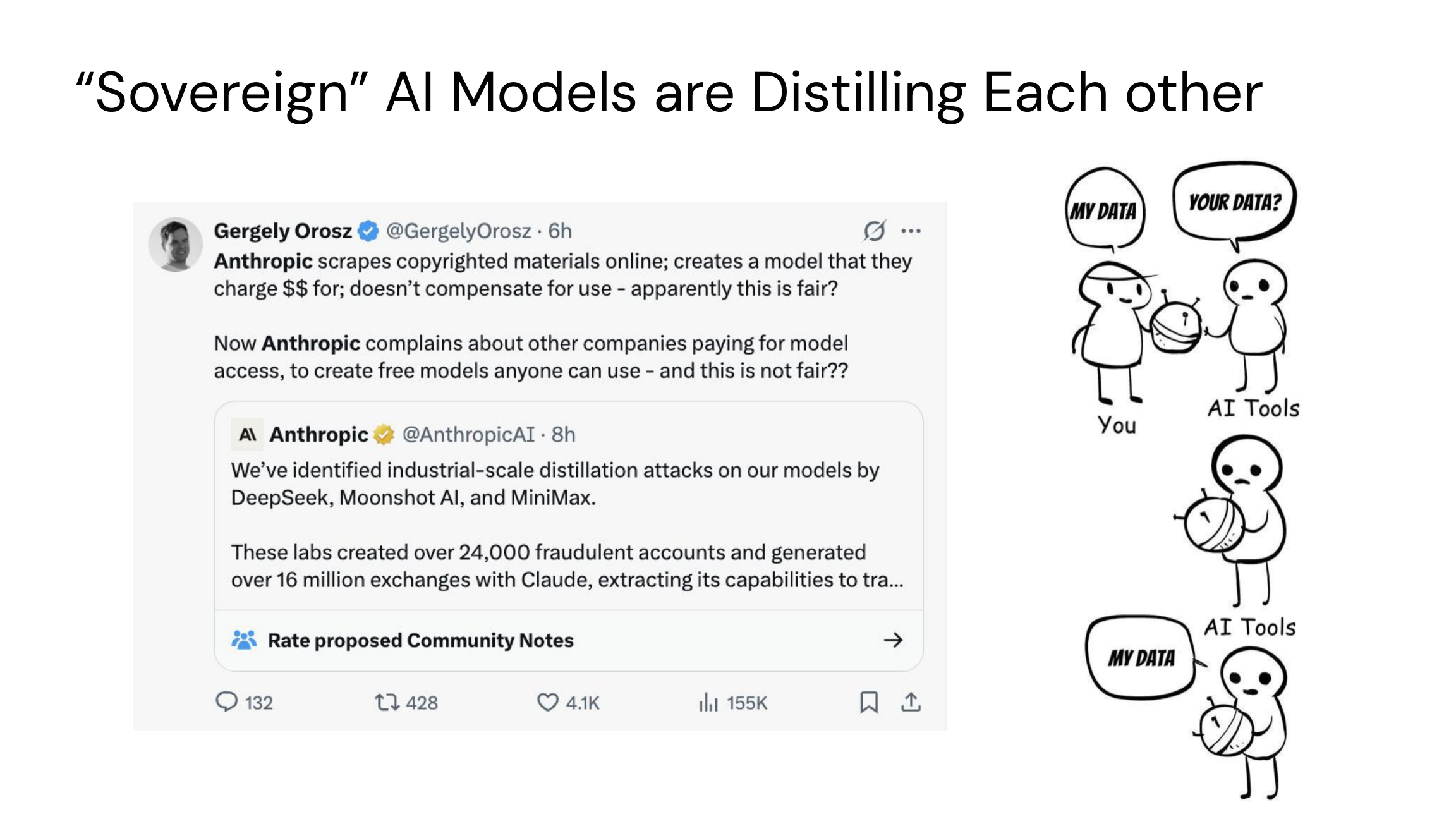

Who Controls the AI?

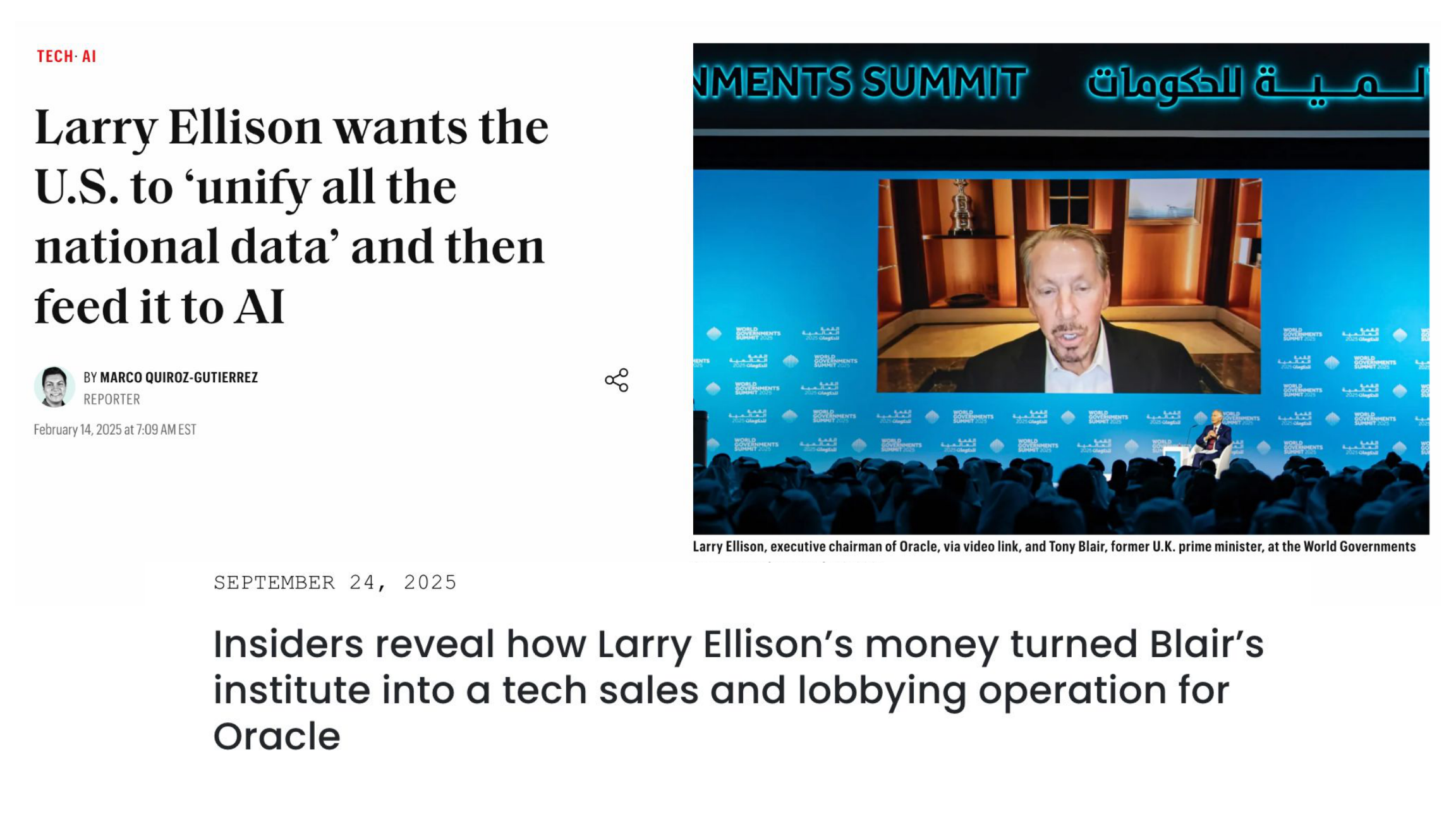

Q4: Who Controls the AI?

Industry Dominance

Frontier models are concentrated in a few firms with massive compute budgets and proprietary data/evals

Open-Weight Trend

Meta Llama 3 (8B to 405B) increases access under custom licenses, but it is still not "fully open source"

Infrastructure Costs

Capital-intensive training; costs and scale reinforce centralization in hyperscalers and chip vendors

Sources: Stanford AI Index 2024 | Meta Llama 3 | McKinsey Compute Analysis

Q4: Discussion and Takeaways

Sovereignty Plan

Will you rely solely on APIs, or build a dual stack (closed APIs + open-weight models on your VPC)?

Vendor Concentration Risk

How do export controls and market concentration affect your resilience and bargaining power?

Strategic Imperative

Avoid one-way doors. Design a portable architecture: model abstraction layer, standardized evals, and configurable guardrails so you can switch models as prices and quality shift.
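One way to picture the portable architecture is a thin wrapper that application code calls instead of any vendor SDK. The sketch below is an assumption-laden toy: `EchoModel` and `UpperModel` are stand-ins for a closed API and a self-hosted open-weight model, and the guardrail and eval set are invented for illustration.

```python
from typing import Callable, Protocol


class TextModel(Protocol):
    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for a closed API client (hypothetical)."""
    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    """Stand-in for a self-hosted open-weight model (hypothetical)."""
    def generate(self, prompt: str) -> str:
        return prompt.upper()


Guardrail = Callable[[str], bool]  # returns True if the output is acceptable


class Assistant:
    """Application code depends on this wrapper, not on a specific vendor."""
    def __init__(self, model: TextModel, guardrails: list[Guardrail]):
        self.model = model
        self.guardrails = guardrails

    def ask(self, prompt: str) -> str:
        output = self.model.generate(prompt)
        if all(rule(output) for rule in self.guardrails):
            return output
        return "[blocked by guardrail]"


def run_evals(model: TextModel, cases: list[tuple[str, str]]) -> float:
    """Same eval set for every model: fraction of exact-match answers."""
    return sum(model.generate(p) == want for p, want in cases) / len(cases)


def no_shouting(text: str) -> bool:
    """Toy guardrail: reject all-caps output."""
    return not text.isupper()


cases = [("hi", "echo: hi"), ("ok", "OK")]
scores = {type(m).__name__: run_evals(m, cases) for m in (EchoModel(), UpperModel())}
```

Because both models satisfy the same `generate` interface, swapping one for the other is a one-line change in how `Assistant` is constructed. The shared eval set gives a like-for-like score to inform that decision.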

QUESTION 5

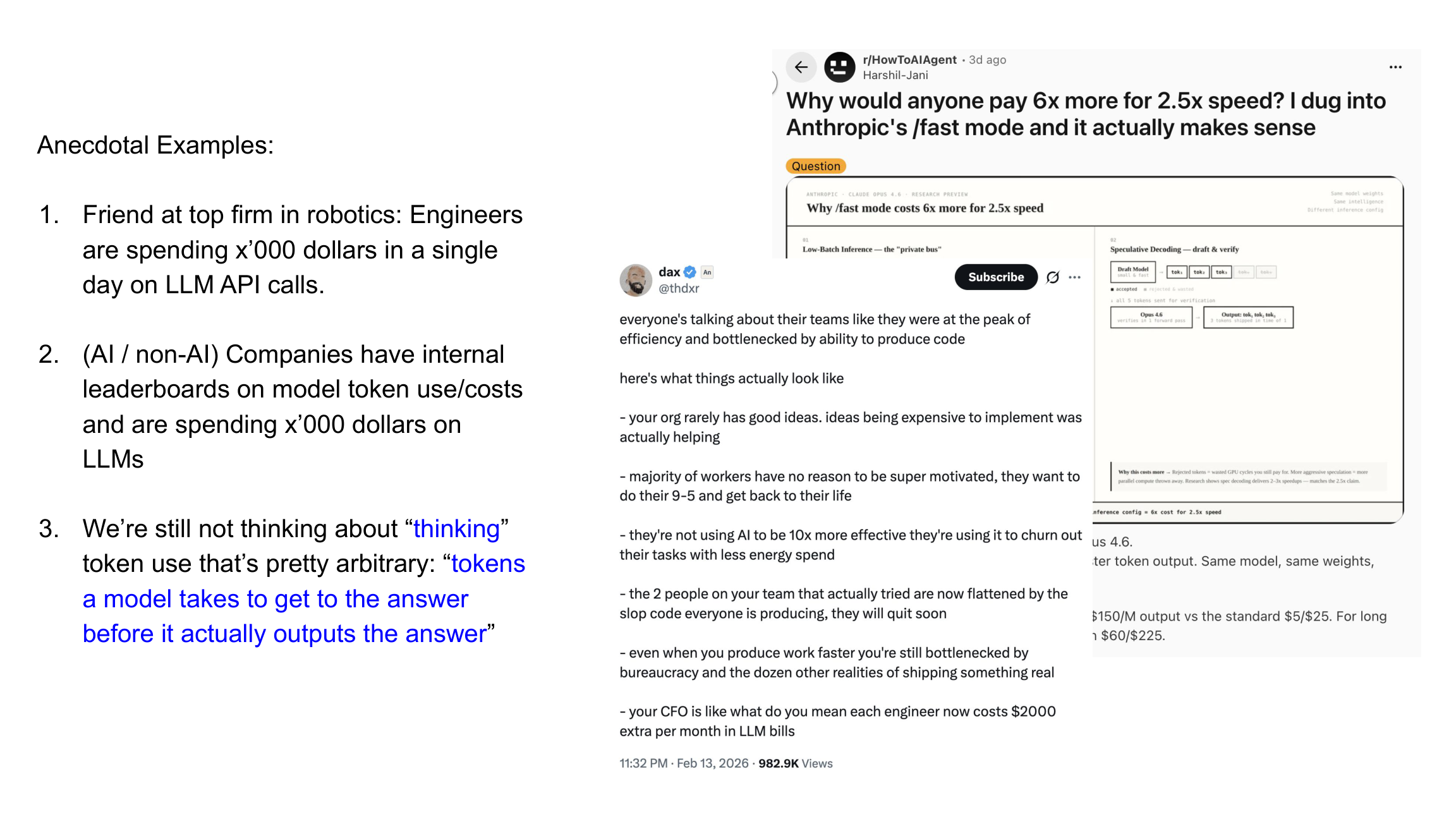

Why Are LLMs Priced So Cheaply?

Q5: Why Are LLMs Priced So Cheaply?

Race for Mindshare

Providers cut prices aggressively to win developer adoption. Competition intensified in China during 2024.

Inference Cost Dynamics

Serving is the main ongoing cost at scale. Hardware, batching, and distillation improve economics, but margins stay thin.

Macro Build-Out

Multi-trillion dollar data center expansion creates pressure for low per-token pricing

Sources: SemiAnalysis | McKinsey

Q5: Discussion and Takeaways

The Jevons Paradox Question

As tokens get cheaper, will your total spend actually fall, or will usage balloon, keeping costs constant or higher?

Optimization Levers

- Where can retrieval (RAG) replace expensive generation?

- Can smaller models handle specific tasks at lower cost?

- How much can caching reduce redundant inference?
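To get a rough feel for the caching lever, one could wrap the model call in an exact-match cache and measure the hit rate on real traffic. The sketch below assumes a hypothetical `fake_llm` function in place of a real model call and only normalizes case and whitespace; semantic (embedding-based) caching is a separate technique not shown here.

```python
import hashlib


class CachedModel:
    """Wraps any callable prompt -> text and reuses answers for repeat prompts."""

    def __init__(self, generate):
        self.generate = generate
        self.cache = {}
        self.hits = 0
        self.misses = 0

    def ask(self, prompt):
        # Normalize lightly so trivial whitespace/case differences share a key.
        normalized = " ".join(prompt.lower().split())
        key = hashlib.sha256(normalized.encode()).hexdigest()
        if key in self.cache:
            self.hits += 1
        else:
            self.misses += 1
            self.cache[key] = self.generate(prompt)
        return self.cache[key]

    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def fake_llm(prompt):
    """Hypothetical stand-in for a paid model call."""
    return f"answer to: {prompt}"


model = CachedModel(fake_llm)
for p in ["Reset my password", "reset my  password",
          "Cancel my order", "Reset my password"]:
    model.ask(p)
```

In this toy run, half of the requests are served from the cache, so only two of the four prompts would incur inference cost.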

Metric That Matters: Track cost-per-business-outcome (e.g., cost per qualified lead, per resolved ticket), not cost per token alone.
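The Jevons question and the cost-per-outcome metric can be worked through with simple arithmetic. All numbers below are invented for illustration: price per million tokens falls 4x while usage grows 5x.

```python
def monthly_spend(tokens, price_per_million):
    """Total spend in dollars for a month of token usage."""
    return tokens / 1_000_000 * price_per_million


def cost_per_outcome(spend, outcomes):
    """Dollars per business outcome, e.g. per resolved ticket."""
    return spend / outcomes


# Made-up numbers: price falls 4x, usage grows 5x.
before = monthly_spend(tokens=200_000_000, price_per_million=10.0)   # 2000.0
after = monthly_spend(tokens=1_000_000_000, price_per_million=2.5)   # 2500.0

before_per_ticket = cost_per_outcome(before, outcomes=4_000)   # 0.5
after_per_ticket = cost_per_outcome(after, outcomes=10_000)    # 0.25
```

In this scenario total spend rises from $2,000 to $2,500 even though tokens got cheaper, yet cost per resolved ticket halves. That is why the per-outcome metric is the one worth tracking.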

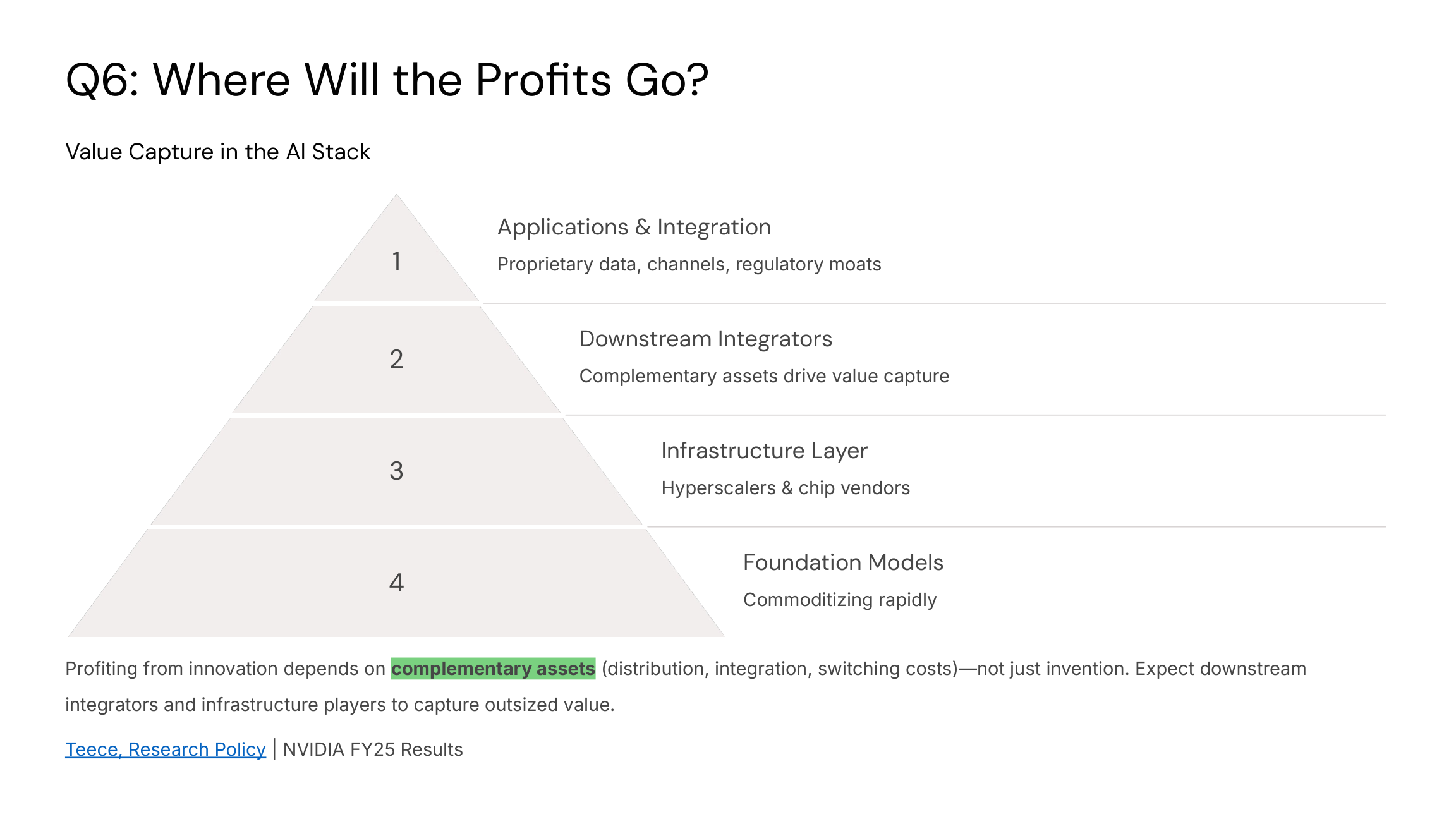

QUESTION 6

What Is the Business Model for AI Companies?

Q6: Discussion and Takeaways

Which complements do you own: distribution, proprietary data, brand equity, deep integration, or regulatory compliance? If you don't own any of these, you are donating all your value to platform providers.

Differentiation Strategy

Treat foundation models as a commodity input. Differentiate on data advantage, user experience, and last-mile integration where you control the customer relationship.

QUESTION 7

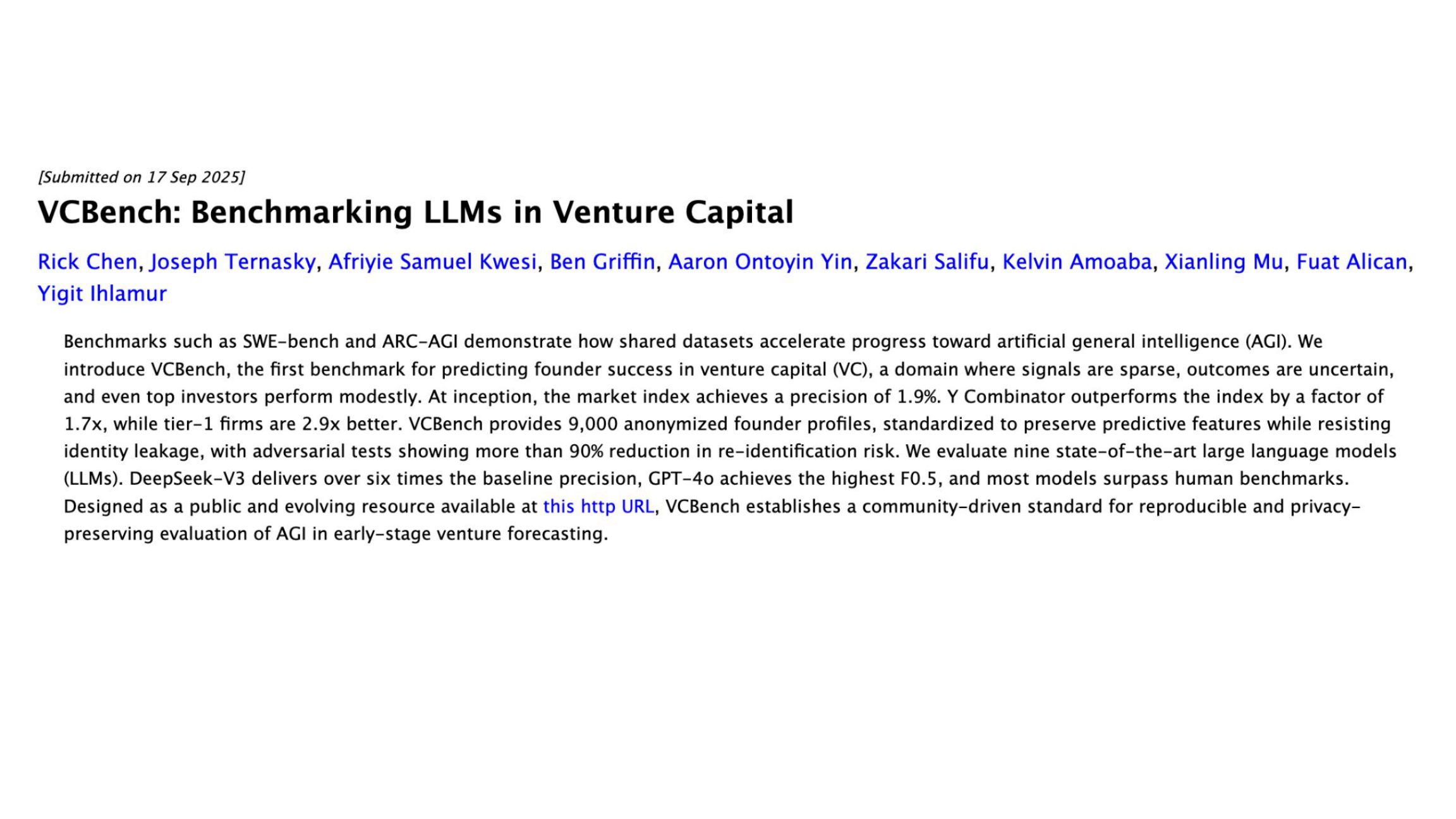

When Is AGI Expected?

Q7: Discussion and Takeaways

Define Your Trigger Table

What specific evals or capability thresholds would force you to re-evaluate strategy?
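A trigger table can be kept as plain data and checked whenever new eval results arrive. The eval names, thresholds, and actions below are hypothetical placeholders; each organization would define its own.

```python
from dataclasses import dataclass


@dataclass
class Trigger:
    eval_name: str     # name of an internal eval (hypothetical)
    threshold: float   # score at or above which the trigger fires
    action: str        # what the team commits to do when it fires


def fired(triggers, scores):
    """Return actions for every trigger whose eval score meets its threshold."""
    return [t.action for t in triggers
            if scores.get(t.eval_name, float("-inf")) >= t.threshold]


triggers = [
    Trigger("contract_review_accuracy", 0.95, "re-scope legal review workflow"),
    Trigger("tier1_ticket_resolution", 0.80, "revisit support staffing plan"),
]
scores = {"contract_review_accuracy": 0.91, "tier1_ticket_resolution": 0.84}
actions = fired(triggers, scores)
```

Only the second trigger fires with these sample scores. Writing the thresholds down in advance is what keeps the strategy review tied to measured capability, not to headlines.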

Build Model-Swap Flexibility

Design systems with abstraction layers so you can switch models as capabilities and costs shift

Continuous Skills Development

Invest in workforce adaptability and evaluation infrastructure, not speculative roadmaps

AGI-Agnostic Operating Model: Build flexibility, continuous learning, and product roadmaps that don't depend on speculative breakthroughs.

QUESTION 8

Will Geopolitics Affect AI's Future?

Q8: Will Geopolitics Affect AI's Future?

U.S. Export Controls (2022 to 2024)

Restrict advanced chips and manufacturing equipment to China; rules refreshed Oct 2023 and Apr 2024 to close loopholes

EU AI Act (2024)

First comprehensive horizontal AI regulation, with extraterritorial effects for providers placing systems on the EU market

International Coordination

UK Bletchley Declaration (Nov 2023) and Seoul AI Summit (May 2024) produced safety commitments, but these are still voluntary norms

Source: UK Government

Q8: Discussion and Takeaways

- How do you hedge supply-chain and compliance risk (compute, chips, data transfer, model access)?

- What is your contingency if export controls tighten or new regulations emerge?

- Are you over-concentrated in a single vendor or jurisdiction?

Build a Regulatory Radar

Monitor evolving rules across jurisdictions where you operate

Diversify Vendors and Regions

Reduce single points of failure in compute, chips, and data infrastructure

Document Provenance

Log evals, human oversight, and decision trails for high-risk use cases

Cross-Cutting Risks

Hallucinations

LLMs can produce confident falsehoods. Mitigation requires retrieval (RAG), task decomposition, verification steps, and clear UX to set user expectations.

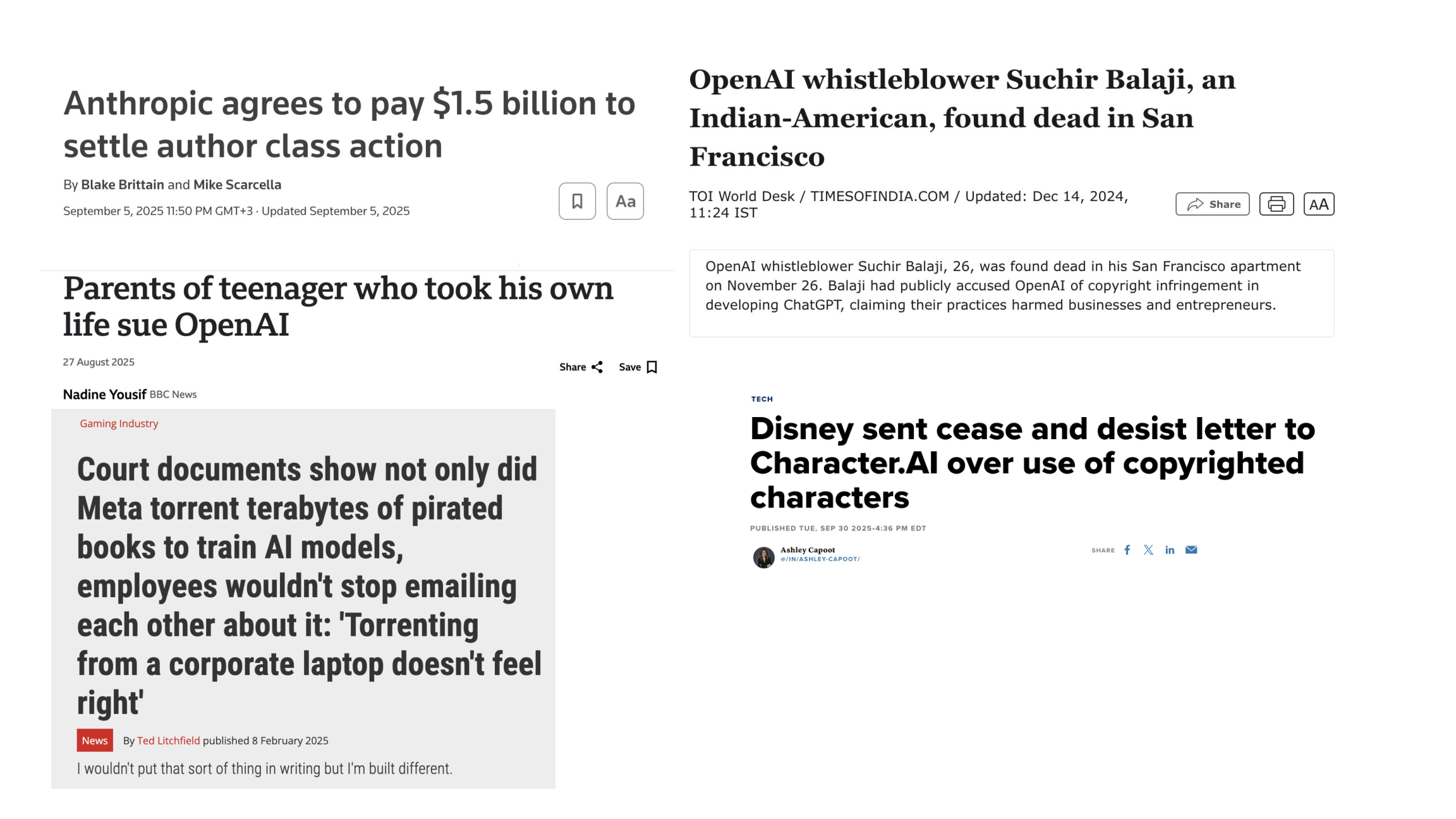

Copyright and Data Provenance

Active legal fronts (NYT v. Microsoft and OpenAI; Authors Guild v. OpenAI). Licensing and provenance tooling will become critical differentiators.

Safety and Governance

Establish continuous evals and red-teaming protocols. Log and review AI decisions. Implement guardrails. Prefer traceable training and fine-tuning data where feasible.

Where to Place Your First (or Next) Bets

Pick 2 to 3 High-Volume Use Cases

Focus on measurable outcomes: customer support, sales ops, knowledge management. Track clear before/after metrics.

Ship a Thin Slice in 60 to 90 Days

Baseline, pilot, A/B test, scale. Treat models as replaceable. Build abstraction layers from day one.

Embed Governance Early

Adopt NIST AI RMF "govern-map-measure-manage." Align with EU AI Act risk tiers if you touch EU markets.

Final Thought

The organizations that win with AI won't be the ones with the best models. They'll be the ones with the best processes, evals, and change management.

Recent Developments: Yes, This Is Real

Allbirds, the shoe company, is pivoting to become a GPU cloud provider

CNBC, CNN, TechCrunch, April 15, 2026

- Sold its footwear IP and assets for $39 million

- Rebranding as "NewBird AI", a GPU-as-a-Service provider

- Raised $50M in convertible debt to acquire GPU hardware

- Stock surged +582% in a single day, from under $3 to $16.99

BIRD (NASDAQ): $16.99, +582% · Apr 15, 2026 · Market Close

A shoe company valued at $21M on Tuesday is now worth $148M as a GPU provider. The "AI" label alone added $127 million in market cap.

For context: Long Island Iced Tea rebranded to "Long Blockchain" in 2017 and saw a similar surge. The SEC later charged three individuals with insider trading tied to that announcement, and the registration of the company's shares was revoked.